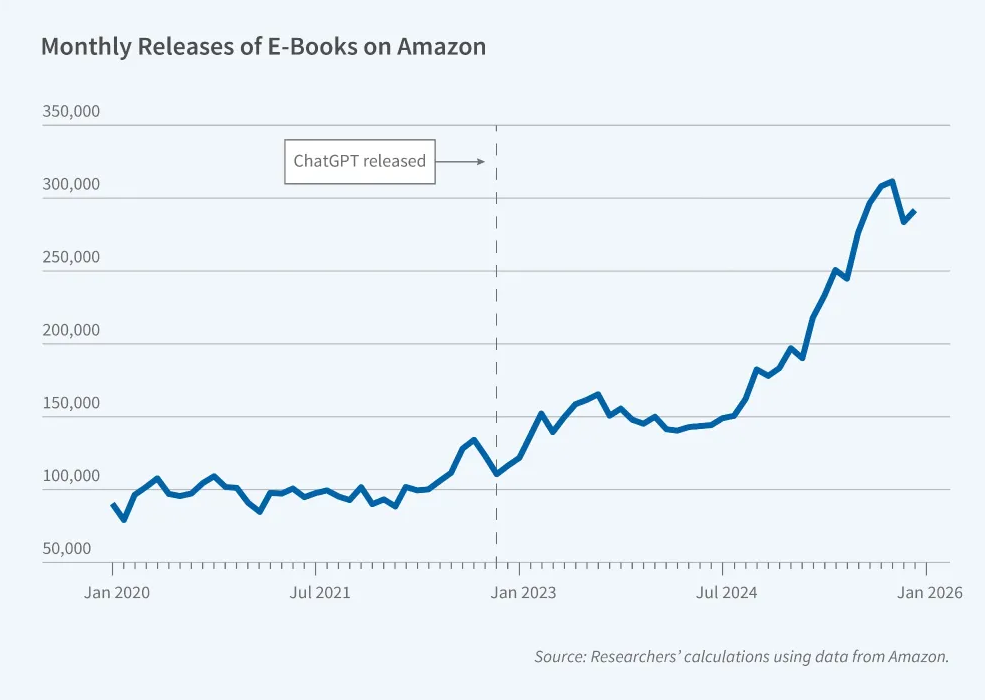

Similarly, a report from Deezer last year showed that over 50,000 GenAI tracks are uploaded every day to the music streaming platform. 50k! Every single day! We’re literally drowning in culture, eh? People use AI to generate entire bands for Spotify, then use bot farms to drive up the streams to get paid. Humans have been cut out of the loop entirely! What a time to be alive.

Forgive me for going Full LinkedIn, but this really did get me thinking about my job… and communications, and social media, and marketing, and content, and the arts. In a world in which there is an abundance of almost everything arts-related (music, visual imagery, video, literature) the only things there aren’t an abundance of are authenticity and human creativity – and they become even more valuable as a result. We need to remember this, and be prepared to swim against the tide to defend it.

This also got me thinking (stay with me here…) about mortality. The Venn diagram of tech bro billionaires who are interested in ‘longevity research’ (for which read: living for an incredibly long time beyond the expected human life-span) and those who are interested in GenAI output more or less replacing human output is a circle. I don’t think that’s a coincidence.

I’m old fashioned in that I think the thing which gives life *meaning* is that it’s finite. When you have an infinite supply of something – be that literature, music, or even immortality itself – the overall value of it inevitably goes down. But there is (forgive me, again) an opportunity here: to define ourselves through opposition. To take the artisanal route, rather than hanging onto the coattails of mass production.

If we end up in this landscape where 90% of what we consume is just AI slop, that means genuine cultural experiences will become incredibly valuable. Not to everyone, but to some people – and I think those people are the ones interested in arts, and culture, and education, and progress. This is how we can stand out, this is how we can engage. Do you want to be the 6 millionth account to post some second-rate GenAI imagery? Wouldn’t you rather be the exception that only posts genuine pictures? Wouldn’t you rather be part of the group who proactively reclaim music, and literature, and videography, and even something as relatively prosaic as a social media post, as something of value - and nurture that?

The social media accounts I run for my org don’t use any GenAI music, imagery or video. We post less than we would if we did use that stuff, because it certainly speeds things up. It is incredibly quick to produce marketing materials with GenAI. But most of them are basically rubbish. The frictionlessness of GenAI somehow seeps through – and no one really cares or learns, or remembers. And of course, many people will completely write you and your content off if you use GenAI in your posts or your slides or your website or your newsletter. They feel that if you aren’t prepared to create content yourself, they shouldn’t have to consume it either. They feel – whether you intend this or not – like you are treating them with contempt when you use GenAI.

Some people will be fine with all AI slop, all the time – but a lot of people won’t. Creativity is no longer technically required to write books or music, but it is required for OUR sake as humans. We will increasingly need to reclaim art as more than just background noise and filler. We need to reclaim the ACT of creation as valuable, not just the product. And as everything else gets watered down and diluted into meaninglessness, human experiences and connectivity become more valuable than ever. Write that music. Write that book. Write that social media post using your own brain and your own words.

Don’t give in to the idea that GenAI is an inevitable, unstoppable force: challenge all of those people who say ‘AI is here now - you can’t put the toothpaste back in the tube!’. To quote my friend Simon Bowie:

“When I accidentally squeeze out too much toothpaste, I don't then shove it all in my mouth. I wash it down the plughole.”